Briefing to Quantum Computing

[This text was originally published in Viestimies-journal. This version is slightly updated and translated to english.]

This article briefly reviews the nature of quantum computing and explores the opportunities and threats it brings. The perspective focuses on information security and the vision of warfare evolution.

Theoretical Background

The foundation of current digital computers was established in the early 20th century. At that time, as formal mathematics developed, various computational models were created that serve as the basis for modern transistor-based machine architecture. The computer was an unknown concept at the time, and it was more about automating mechanical computation, as well as creating formal mathematics, which in certain respects largely reduces to the former.

Perhaps the most well-known of these formalizations of computation are Markov algorithms, Lambda calculus, and probably the most famous, Turing's Turing machine. It has since been shown that these are all equivalent in terms of expressive power, meaning all can compute the same things. In other words, when building a Turing machine for any purpose, it can also be implemented in Lambda calculus and Markov algorithms, and vice versa.

Often, however, the focus is more on how quickly the desired thing can be computed. Current different models are all similar in the sense that none can offer a substantially faster way to perform computation than others. In practice, all reasonably constructed mechanisms can be reduced to other equivalent ones at most with so-called polynomial-time reduction, i.e., without significant slowdown (or speedup).

Quantum computers, however, are challenging this situation in an unprecedented way.

Quantum Computers

In a traditional computer all computation ultimately reduces to tiny bit-level operations that modern processors churn through at incomprehensible speed. Computer processors, i.e., the computational engine, and memory are built to perform very simple operations. In their reality, a single bit is either 1 or 0, and the result of each calculation is bits/bytes that are completely determined by the inputs.

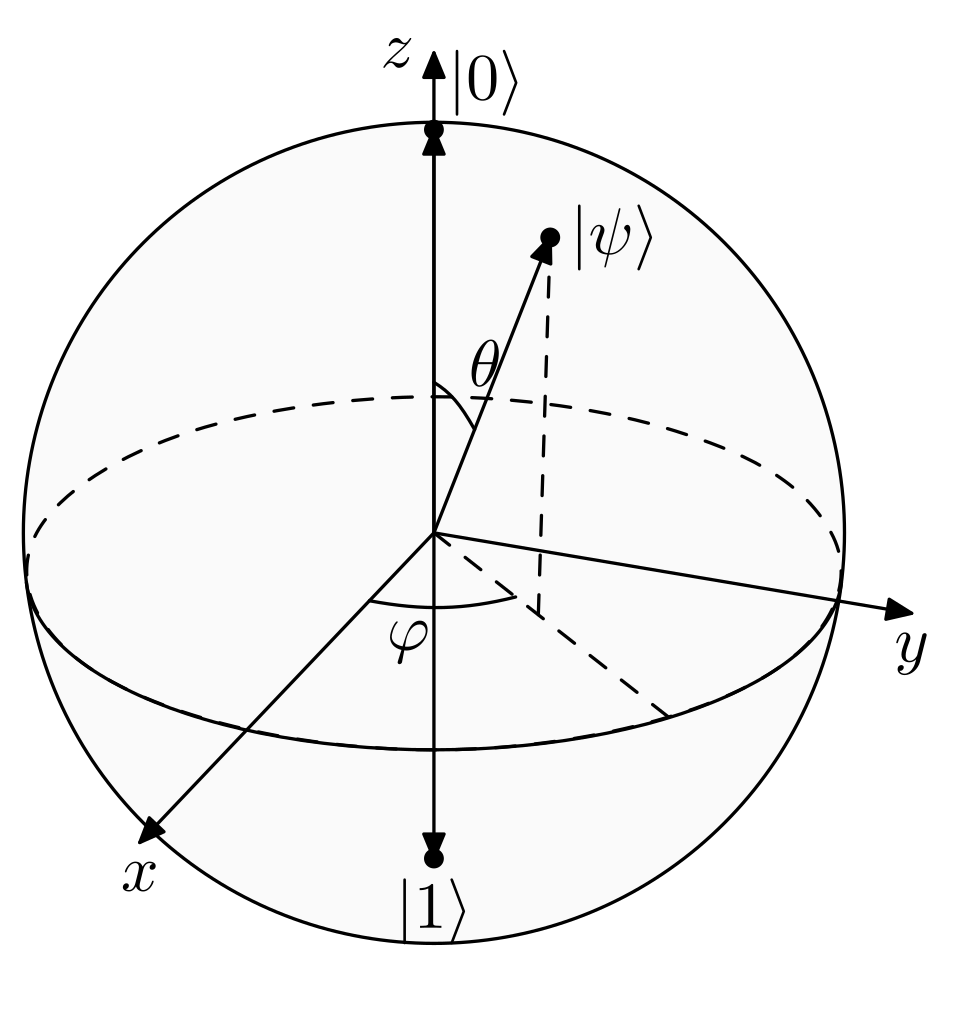

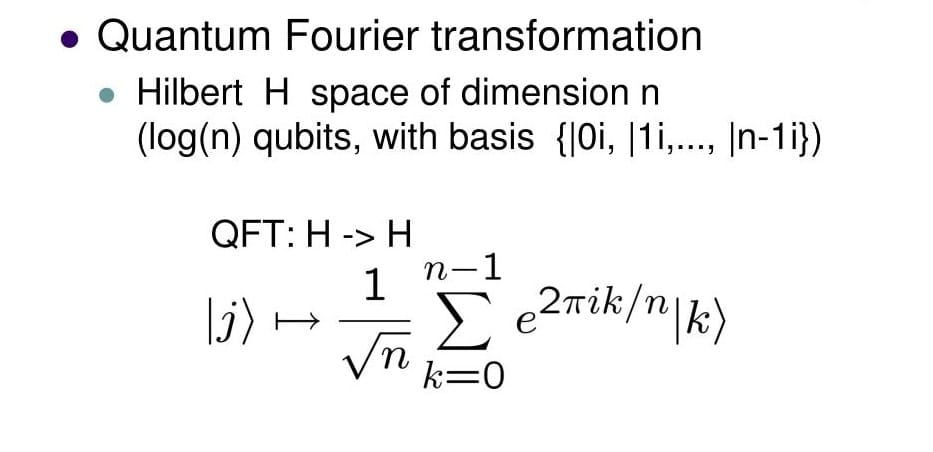

In quantum computers, the bit is replaced by the concept of a qubit. While a traditional bit is either 1 or 0 and nothing else, a qubit can be 1 or 0 or a superposition of them. This superposition can be thought of as a certain probability of whether the qubit is read as one or zero. What is special is that the superposition of a single qubit can immediately affect the superposition of another qubit. The underlying physics is therefore very different from what we are used to in the traditional computer world, and the formalization of computation requires a new approach. For example, modeling a qubit requires very different tools instead of traditional 0-1 thinking, as exemplified in figure below.

Simplifying the whole, it can be thought that the efficiency of quantum computers largely arises from their ability to perform computation in a certain sense in parallel through the mentioned interactions. If we think of a traditional computer, anyone who has done programming knows the concept of a process and how a process is always fundamentally run on one processor. Execution is purely sequential, i.e., queue-like, and from one state you move through computation to another state. The internal world of quantum computers is different, and simplifying, they can be thought of as computing multiple states "simultaneously". This capability brings speed advantages.

On The Time Complexity of Algorithms

It appears that quantum computers will, at least initially, be superior with problems that have a clear, moderately sized input and a calculated answer from it, where the computation takes time, i.e., the problem to be computed is difficult.

To grasp concrete cases, we must define the "difficulty" of computation. In computational complexity theory, a framework has been created that can quite well evaluate what kinds of problems are difficult for computers to solve, i.e., which problems take a lot of computational time.

Computation is thought of as a model where a computer receives a number and outputs a number as an answer. The machine's (actually the algorithm's) computation is monitored for the number of calculations relative to the input length. Not the value, but the length of the string, i.e., the number. With this definition, problems can be classified into different complexity classes.

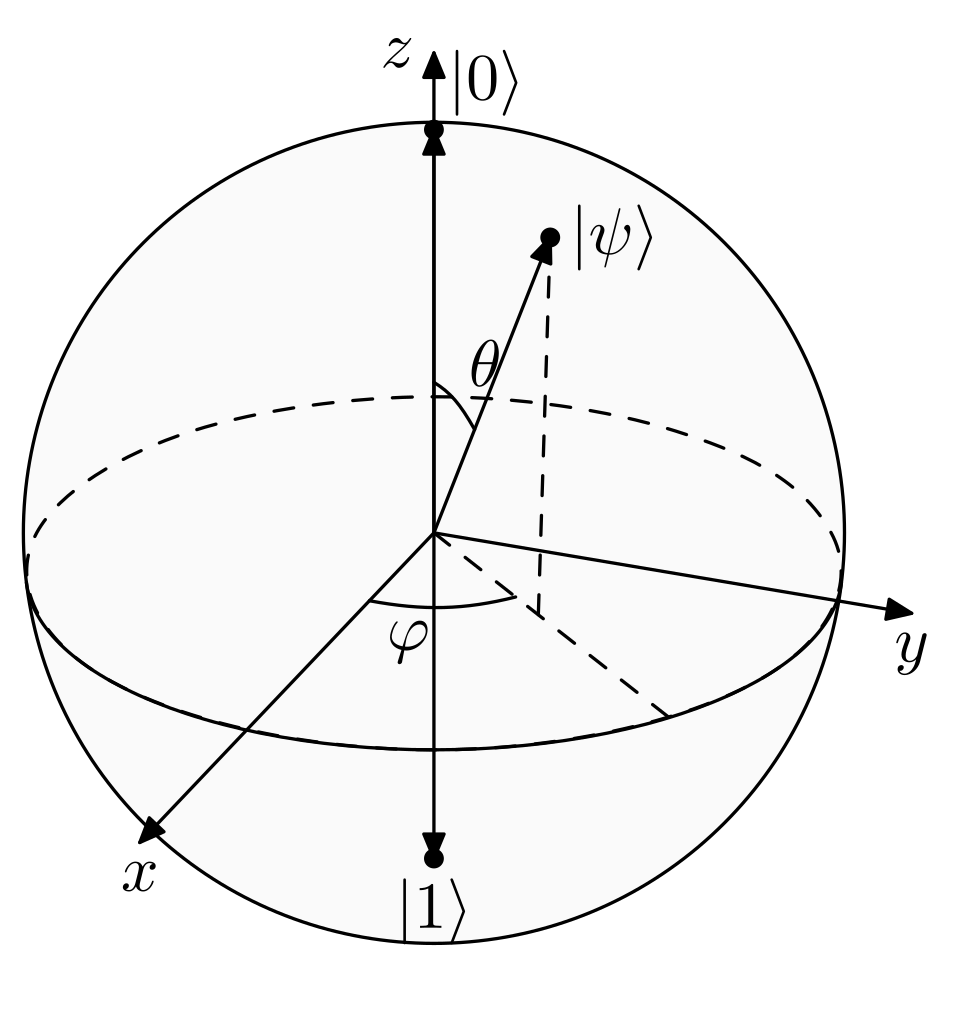

In this context, it is sufficient to know that if a problem can be solved with traditional computational methods (Notice; in the quantum world, the definitions are different, but let's not let that bother us.) in time that is polynomial relative to the input length, then it is practically computable. In this case, the problem's time complexity class is said to be P. If a problem could be solved in linear time (i.e., really quickly), it would belong to the LIN class. Since problems solvable in linear time are solved faster than in polynomial time, it is noted that every LIN class problem is actually also in class P and correspondingly in all other classes that are more difficult to compute than the LIN class.

Often it is relatively straightforward to find the time complexity class in which a problem lies. The challenge, however, is that it is difficult to prove that it couldn't be solved faster. The challenge follows from the definition itself, because time complexity is defined for the algorithm that solves the problem, not for the problem itself. So if you want to show that a faster implementation doesn't exist, you have to delve very deeply into the nature of the problem itself.

This situation becomes uncomfortably strong in the case of the time complexity class NP. NP roughly consists of problems where the correctness of an existing answer candidate can be verified in polynomial time, but finding that candidate has no known polynomial method; rather, the algorithms are practically exponential in time complexity. Finding a solution is therefore difficult. From the definitions directly, it is known that P belongs to NP, but one of the hot potatoes has been for decades whether P=NP.

The NP class is interesting because the current understanding is that fast algorithms cannot be developed for problems belonging to it with current computers. However, no one has been able to prove the matter one way or the other. NP contains many problems for which a fast algorithm would be desired, but this has not been successful.

Quantum Computer Performance

The strength of quantum computers lies in their ability to explore many possibilities simultaneously, making them much faster for certain specific problems sometimes with almost exponentially faster than current algorithms.

The situation is delicious because this would enable solving many practical problems quickly. One general example is the so-called traveling salesman problem, where the aim is to find the fastest route for a traveling salesman in a given environment. The problem (appropriately formulated) is a famous NP-complete problem and it generalizes quite straightforwardly to, for example, optimizing logistics chains.

Another everyday example is arranging a lecture calendar so that students have as few conflicts as possible with given preconditions. This also generalizes quite straightforwardly to other similar optimization problems.

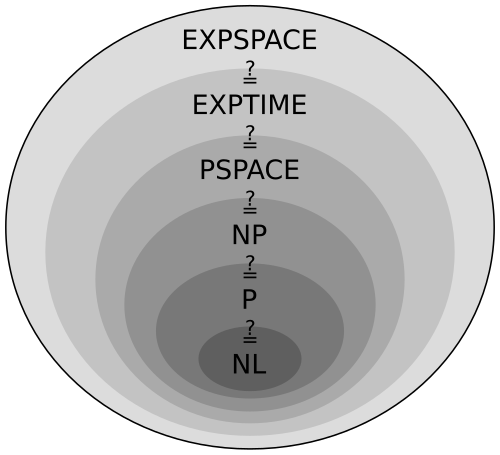

The increase in performance also brings problems. For example, the security of many encryption algorithms is based on the assumption that the underlying algorithms are such that they cannot be broken in polynomial time. More concretely, many encryption algorithms are based on the assumption that, for example, the discrete logarithm or integer factorization cannot be calculated in polynomial time, i.e., efficiently with large inputs. This assumption has held for decades, but with quantum computers, the situation may change.

However, various communities are alert, and for example, in the spring 2023 NATO ACT TIDE sprint , among others, the DCS-track (Data Centric Security) considered when to start requiring quantum-resistant algorithms. Algorithms themselves are also being developed for several purposes. More information can be found with the search terms post-quantum cryptography.

In the United States, NIST published in fall 2022, after a six-year competition, its chosen algorithms on which quantum-resistant encryption is being developed. CRYSTALS-Kyber was selected as the general-purpose algorithm, and for digital signing, the trio CRYSTALS-Dilithium, FALCON, and SPHINCS+. The algorithms are fundamentally very different from current ones, and this work is partly ongoing. The topic is the subject of intense research.

The standards for the implementations were published in August 2024.

NIST has also published a table (NIST Internal Report (NISTIR) 8105, Report on Post-Quantum Cryptography) that assesses the fate of various cryptographic algorithms if quantum computing becomes possible.

Relation to Traditional Computing Models

It is also good to be aware that there are situations where quantum computers are not believed to be challengers to current technologies. One example is processing and analyzing large data masses. This is because transferring information into a quantum computer is, at least currently, also an operation in itself. It would seem, then, that the smaller the input and the more difficult the calculation, the better the benefits of quantum computing become apparent, and vice versa. At least in the early stages of technology development.

A perhaps somewhat surprising direction is that in the development of quantum computing algorithms, it has been necessary to think and model things in a completely new way. This intellectual challenge has brought new insights also to the development of current algorithms. We talk about "quantum-inspired" algorithms, where new ideas for implementation have been obtained by considering implementation in the quantum computing framework.

Quantum Software and Hardware

The first thoughts on quantum computing probably arose around the same time when quantum mechanics and computational theory began to find each other. In the early 1980s, Paul Benioff presented the idea of a mechanical implementation of quantum computing, and slightly later Richard Feynman presented ideas for simulating physics with quantum computers.

Peter Shor presented in 1994 the first concrete quantum computing algorithm for factoring integers, which is known as Shor's algorithm. The algorithm presents the principle of how an integer can be factored, and it offers a significantly faster method than traditional methods, i.e., the efficiency advantage of the quantum world is realized in it. This caused a small stir in scientific circles at the time and gave a boost to the field, but typical of science, development continued slowly without breakthroughs presented to the general public.

With Shor's algorithm, quantum computers would break essentially all current public-key cryptography systems (RSA, ECC, Diffie-Hellman).

Quantum algorithms that are substantially more efficient than traditional algorithms have hardly been published despite intensive research. In addition to the previously mentioned Shor's algorithm, Grover's algorithm, a search algorithm published in 1996, is one of the relatively few that offers a genuine speed advantage. Although even its benefit is modest and it is not believed to offer a real breakthrough to current difficult problems or, for example, encryption systems, because the speedup is limited (one can say the algorithm halves the search space).

In recent years, hardware development seems to have taken quite large steps. Examples include Google's 72-qubit Bristlecone, IBM's 52-qubit IBM Q3, and Rigetti's 80-qubit Aspen-M-3. On the software side, Microsoft has developed the Q# language, which was created for developing quantum algorithms. Alongside these technology giants, it is a pleasure to note that we also have domestic entrepreneurship, such as the recently publicized IQM and the somewhat newer software-side player Quanscient.

Data Encryption

Secure and functional communication is one of the basic pillars of warfare. The message must not only have the correct content but also reach the right recipient securely. One essential factor of security is encrypting the message at the transport layer. With quantum computing, replacing transport layer encryption algorithms with quantum-resistant ones will become relevant sooner or later. Current development with quantum-resistant algorithms fortunately quite certainly provides solutions to this. Since encryption solutions are often quite transparent to the end user, it is expected that competent administration will independently take care of appropriately updating the technology to be sustainable.

However, communication encryption is a special use case in the sense that the security classification of a message's content can and often does decrease quite quickly over time. Today's secret operation start information may become public the moment the start orders have been distributed. This means that communication encryption must be such that decrypting it in real-time is not possible, and on the other hand, the traffic itself is generally such that decrypting it with delay no longer adds value.

However, the situation is completely different with data whose security classification is high for a considerably longer time period. Material encrypted today with the best possible means may be quite easily opened with the advent of quantum age. If the information is such that it has a high security classification in the future as well, then encrypting it today with an algorithm that works does not necessarily ensure the durability of its encryption tomorrow.

The situation has led to the fact that there are parties collecting encrypted data that cannot currently be decrypted. The idea is that technology development is so fast that soon the encryption will be decryptable. This phenomenon is called in many contexts the "Harvest now – decrypt later" ("HNDL") theory. The situation is at least to some extent problematic because it weakens one layer of information security quite a lot, and there is no direct solution available today.

On the positive side, good information security is layered, and in general, accessing encrypted information on-site has been made really difficult with other architectural solutions. However, the situation with data in transit is different. For example, encrypted information moving on the internet can be stored and thus collected by a hostile party with reasonable effort. For this reason, it may be appropriate, alongside other continuous risk assessment, to consider what kind of information you want to move in which network.

Speed of Warfare

Earlier, the fast execution of certain optimization problems was mentioned as a possible application area for quantum computers. If this is realized, many logistical problems become easy in the sense that the best possible solution can be found quickly for them. In practical life, this means that, for example, many logistical arrangements can be optimized better than today. If this optimization can be implemented throughout the entire operation, it at best brings speed advantages to quite many things and thereby possibly enables significantly faster operation than is currently possible.

Similarly, for decisions to be made in a moment, solution templates or models can be formulated in advance, with the help of which the situation and the impact of decisions can be calculated and evaluated considerably faster and more accurately than currently. This facilitates decision-making and reduces the cognitive load on leadership.

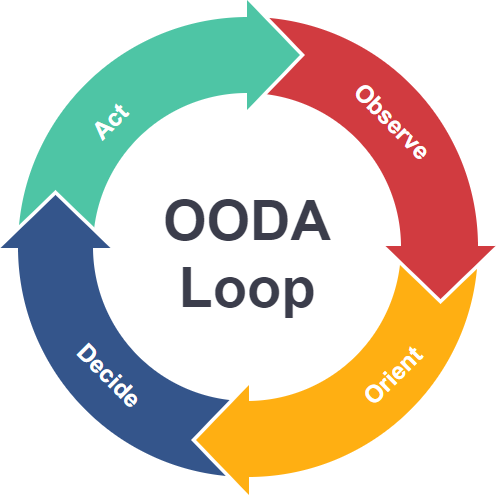

The previous points together provide tools with which the OODA loop can be accelerated as a whole and thereby bring better situational awareness to support decision-making and enable faster reaction to a changed situation. These visions combined with the possibilities of AI to support decision-making may create a war machine where the frequency of decision-making may even rise to become unmanageable for humans. The situation may challenge the basic paradigms of warfare in numerous ways.

Conclusion

Predicting the future is difficult, and after the investigation done for the article, it would seem that major breakthroughs are not expected at least in the coming years. There are still enormous issues to be solved in the technology, and the software layer will also probably live and develop several times before the actual world conquest. This brings a peaceful mind regarding threat scenarios, but at the same time leaves several useful application areas waiting for future breakthroughs.

In any case, we must be alert. And we are.